By Pamela C. Scorzin

Abstract: In this paper, I discuss the impact of artificial intelligence (AI) on contemporary visual culture, mainly on human (body) imagery and the formation of AI avatar design for social media and beyond, i.e., for mixed realities and the Metaverse. What kind of representations of humans does artificial intelligence generate? I use AI imagery as an umbrella term, including prompt engineering. What do algorithmic images created by contemporary AI image generators like Midjourney, DALL·E 2, or Stable Diffusion, among others, represent? What kind of reality do they depict? And to which ideologies and contemporary body concepts do they refer? Moreover, we can observe a visual paradox herein: The more realistic the AI images created by GANs and Diffusion models within AI image generators now appear, the less clear their reference to reality and any truth content becomes. However, what synthetic images created by intelligent algorithms depict is seen as something other than unreal and fictitious since what becomes visible refers to information minted from the metadata of vast amounts of circulating images (on the internet). Making the invisible visible and distributing it via digital platforms becomes the act of communicating with AI images that ‘in-form’ and affect their recipients by creating real resonance. The timeline of this new photo-based imaging technology points more to the future than to the present and past. Thus, AI images as meta-images can represent a different form or level of reality in a simulated photo-realistic style that functions as effective visual rhetoric for globally networked communities of the present. Moreover, in the age of cooperation and co-creation between man and machine within complex networks, the design process can now start with just the command line prompt “/imagine” (Midjourney) – transforming the following text/ekphrases into an operative means of design/artistic production.
AI images are thus also operative images turning into a new technology-based visual language emerging from a large technological network. As networked images and meta-images, they can fabricate and fabulate the meta-human.

Algorithmic Images as Networked Images

More and more frequently, we encounter photo-realistic images of people who have never lived – who do not exist and have never been before a camera apparatus (cf. fig. 1). We see their faces and bodies in popular media as well as in the arts and design. They have been mimicked and simulated by artificial intelligence, either through a GAN (generative adversarial network) or a Diffusion model, trained with stock photography or social media images and massive image datasets from the world wide web. So-called ‘prompt images’ have also flooded social media platforms in the months since the summer of 2022 and, at the same time, instantly led to numerous media-technical and ethical debates, e.g., about their epistemic value and the degree of creativity behind their creation (cf. Kelly 2022). Generative artmaking and prompt engineering (which means finding the right words and instructions for meaningful and valuable inputs) turned into many people’s favorite pastime as well as into a buzzword on the internet over the last year. Thus, intelligent algorithms and new AI image generators (now ubiquitously available – such as DALL·E, Artbreeder, WOMBO Dream, Midjourney, or Stable Diffusion) that can instantly turn text prompts or existing images into novel, unique forms of AI imagery are emerging as assistive creative tools and mood boards for just about everyone – reminding us of Joseph Beuys’ famous dictum: ‘everybody can be an artist nowadays!’.

Figure 1:

Generated with https://thispersondoesnotexist.com [accessed March 22, 2023], August 2022

Then again, these new image platforms and advanced information technologies are fueling heated debates about authenticity and (artistic) creativity with their potential to take over artists’ and designers’ jobs, not to mention issues with authorship and copyright. The sudden technological leap in artificial intelligence image production has been made possible by recent advances in deep learning technologies, particularly natural language processing, in concert with generative adversarial networks (GANs) or Diffusion models. Anyone can easily formulate a command description or provide another image as input without major prior training. A model with ‘intelligent’ algorithms then auto-translates this input information into a cohesive computational image or even a deep fake (like the viral ‘DeepTomCruise’ on the popular social media platform TikTok, cf. Vincent 2021). These often highly photo-realistic but purely synthetic digital images are used commercially not only in marketing and advertising, for example, but also in the film and game design industries.

Today, advanced AI-powered software programs such as the MetaHuman Creator are ubiquitously and readily available for this purpose and other commercial interests. The operations of this novel AI-supported image generation are also sometimes used as intelligent tools for archaeological or historical reconstruction and speculative visualization in the field of knowledge production and education or, instead, serve political activists and actors by providing convincing digital illustrations or even fakes. Moreover, large numbers of AI images can be found primarily on social media, especially on fake accounts and in various bot activities. Deep fakes are becoming increasingly difficult to distinguish from reality, raising questions about cybersecurity and human trust for the future. Even if you think you are good at analyzing faces, research shows that many people struggle to distinguish between photos of real faces and computer-generated images. This is particularly problematic now that computer systems can create realistic-looking (moving) pictures of people who do not exist. These deep fakes created with AI are now becoming widespread in everyday culture, which means people should be more aware of how they are used in marketing, advertising, entertainment, and social media since AI images are also used for malicious purposes, such as political propaganda, espionage, and information warfare. Moreover, recent research by Manos Tsakiris (2023) suggests that fake images may erode our trust in others. He found that people perceived GAN faces to be even more real-looking than genuine photos of actual people’s faces and bodies and even more trustworthy. The evolution of AI imagery is accelerating, as these synthetic images can now be animated almost instantly, for example, into ‘personal AI assistants’ with just a click and a few minutes of processing time. Text-to-video and text-to-film generators are on the horizon, too.

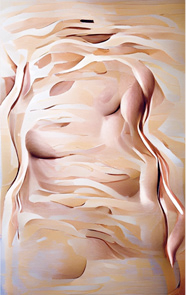

In the fine arts as well, we see various forms of digital body images generated through AI using the same technological tools and methods but often with a particular ‘StyleGAN’ component, which sometimes results in more bizarre, eerie, uncanny, surrealistic, or ‘trippy’ body images. Examples might then look as if they were randomly collaged and digitally montaged (similar to the popular mash-up and sampling techniques that preceded them), or as if they were hallucinated and dreamed up by a vivified creative AI image machine. With this, I am obviously referring to the recurring narrative of ‘AI art’ popularized by critics’ reactions to the spectacular computer vision program DeepDream in 2015 by Alexander Mordvintsev (cf. Riesewieck/Block 2020; Zylinska 2020; Grünberger 2021; Harmsen/Kahl 2021; Hirsch et al. 2021; Manovich/Arielli 2021; Rauterberg 2021; Scorzin 2021a; 2022; 2023). AI-generated images, each created with a specific StyleGAN mimicking an artistic signature or period style, thus often appear to us as a digital synthesis or as a strange hybrid of biological patterns, geological structures, and the painterly abstractions of Classical Modernism (cf. fig. 2).

Figure 2:

Levania Lehr x DALL·E 2, August 2022

Above all, however, these meta-images refer to the prevailing visual cultures, aesthetic preferences, popular tastes, successful formulas, and shared common ideas perpetuated by commercial interests and popular expressions alike of what constitutes a human body in modernity. We have been presented with such repeated expressions in commercial advertising and on social media platforms, among others, for many decades, and new forms of AI-generated body images are just mimicking and emulating the collectively shared and curated image databases of the ‘web 2.0’ with which the algorithms have been pre-trained by developers and programmers. Thus, AI-generated body images can also be characterized as prompted meta-images that reproduce, display, and sometimes even reveal hegemonic image cultures, e.g., viral pictures of the human body in specific socio-cultural communities. At the same time, AI images can be seen as synthetic networked images since they are actualized only by prompts from a latent space of endless possibilities and image-type clusters. Moreover, on some popular platforms like Midjourney, prompting always means instantly publishing one of these possible algorithmic images and propelling a particular agenda (that relates to the motivation and intention of the prompt engineer expressed in the input texts), thus mainly contributing to the endless perpetuation of hegemonic patterns.

The Human Body in Artistic Meta-Images

Contrary to such a hegemonic notion, contemporary artists like Nick Knight, Lynn Hershman Leeson, Avital Meshi, Mal Som, Gregory Chatonsky, Harriet Davey, Boris Eldagsen, and Ivonne Thein, to name just a few, are exploring the possibilities of these new AI image generators and experimenting with them as ‘smart’ tools for their artistic image production. They try to create more conceptual as well as speculative body images that break with hegemonic cliches and dominant stereotypes. As such, they may even flip and subvert prevalent, widespread narratives surrounding AI technology (such as its mythologization and mystification of human-like creators through performing robots, androids, or humanoids) and instead, for example, highlight the inherent bias of the underlying AI training data, i.e., its algorithmic distortion of reality.

Figure 3:

Jake Elwes: Still of deep fake artist from The Zizi Show, 2020, courtesy of the artist

Another approach is to queer the circulating AI imagery in a striking and subversive way, as the British artist Jake Elwes does in his recent works. His web-based installation The Zizi Show (2020, fig. 3), for example, thwarts binary thinking – deeply inscribed in computer code as well as in Western visual culture in general – by calling for more diversity and gender fluidity in content and form. In his 2019 Zizi – Queering the Dataset, he aims to tackle the lack of representation and diversity in facial recognition systems’ training datasets. The multi-channel digital video was made by disrupting these systems and re-training them with the addition of 1,000 images of drag and gender-fluid faces found online. This causes the weights inside the neural network to shift away from the normative identities it was originally trained on and into a space of queerness. The Zizi series lets us peek inside the machine learning system and visualize what the neural network has (and has not) learnt. Thus, this AI-generated artwork celebrates difference and ambiguity, which invites us to reflect on bias in our data-driven society by altering or rather enriching contemporary AI imagery.

Another artistic approach is represented by the Berlin-based artist duo CROSSLUCID, who trained an AI model only with artistic imagery of their own for their “Landscapes” series (since 2020), afterward published on 5,000 covers of the Slanted design magazine (cf. fig. 4) – each individual copy presents a singular and unique ‘AI-generated portrait’ as a kind of original artwork in print. With the co-creations of their AI (cf. Kelly 2022), they stage mesmerizing visions and fictive speculations about the human body of a near future – beyond the dialectics of biology and technology, the natural and the artificial condition, beyond gender, age, and ethnicities. Instead, they are imagining or ‘scenographing’ the current state of latent interconnectedness and (networked) connectivity in their 5,000 AI-generated portraits: its characteristic potential for continuous transition and further evolution – as an ongoing, dynamic, iterative process that hypostasizes itself in the artwork. For this, they actively employ digital glitches and blurry metamorphoses, osmotic mash-ups and dynamic remixes, synthetic mishmash and fluid morphing effects.

Figure 4:

CROSSLUCID, 5,000 design magazine covers with unique AI portraits for Slanted, Vol. 37: AI – Artificial Intelligence (2021), courtesy of Lars Harmsen and Slanted Publishers, Karlsruhe

Binary Code(s)

Combining these observations, the question arises as to how the relationship between new image technology, body image, and photo-based reference to space and time can be further characterized. Digitization and automation, machine vision and machine learning, artificial neural networks and artificial intelligence, as well as their associated discourses in the contemporary techno-sciences, are changing and challenging the modern notion of creativity and artistic genius in our postmodern culture (cf. Miller 2019). Beyond that, the extensive use of ‘intelligent’ algorithms in image production is also opening up new questions about identity and its representation. The focus shifts to both the relationship between human and non-human producers, in particular, and the relationship between constructed artifacts and transparent imitation of nature, in general. At the same time, increasingly hybrid networks of human and non-human actors force a co-existence and co-creativity of man and ‘smart’ machines, while also putting creative autonomy and authorship more and more at stake. These technically induced collaborations manifest themselves in the new algorithmized aesthetics of digital modernity. They also visibly turn our attention away from a purely anthropocentric (world) view and question the autonomous, singularly creative individual and the subjective, personal author.

Contemporary media artists such as AI art pioneer Mario Klingemann or fashion photographer Nick Knight (and many others like Memo Akten, Jake Elwes, Trevor Paglen, Anna Ridler, and Pierre Huyghe, to name just a few) reflect in their AI art, in similar but specific ways, the current influence of artificial intelligence on our digital image production. Their interests lie in experimentation and exploration instead of exploitation or fake production. Seen as a whole, this art production is increasingly turning into a hierarchy-free cooperation and creative collaboration of humans and intelligent machines within complex networks. AI-driven digital productions, however, often remain bound to the combinatory, aleatory, and iterative characteristics of a technologically automated design process. Can this technological operation result in (subjective) art representing reality, or does it merely visualize knowledge based on mathematical equations and stochastic probability? What kind of new body notions could ultimately be envisioned in AI art that synthesizes and actualizes image clusters of circulating body images? Which concepts and visuals arise in the related – often over-sexualized and idolized – AI-powered avatar design for social media and computer games? Here, art and design can certainly afford to be more speculative, even futuristic, as a postdigital avant-garde.

In the remainder of this essay, I suggest an initial definition, describing the algorithmized aesthetics of these novel, AI co-created digital body images as virtual, variable, and viable. These aesthetics conjure up new fluid and flexible identities that may be both transspecies and boldly point toward the super sapiens. For this, however, we need a brief look back and a reminder: Since the 1990s, we have increasingly come to understand biological DNA as a kind of biochemical code comparable to a program of algorithms, which turns the natural body into a freely modifiable organism through new techniques such as genome editing with CRISPR-Cas9. Blockbuster movies such as Jurassic Park (Steven Spielberg, 1993) and The Matrix (The Wachowskis, 1999) staged this coded constitution of biological organisms and digital images as a then-spectacular analogy on screen. Even today, biological organisms like plants, flowers, animals, and human bodies – or even fantastic, hybrid beings combining all of the former – continue to serve many AI artists as prominent motifs and subtle references for their algorithmized works. Increasingly, however, dynamic hybrid networks generate and create synthesized images of the human body in a double sense: The notion of seeing homo sapiens as an autonomous subject, singularly capable of creativity and intelligence, is currently being eroded by trans- and post-humanist concepts, which instead refer to its general (inter-)connectedness and networked connectivity – ecologically, culturally, and technologically. Moreover,

the crossovers between cybernetics and environmental sciences, molecular biology and informatics, neurology and robotics expand our knowledge of the human being and lead, at the same time, to the questioning of the singularity and centrality of the Anthropos in all his/her dimensions – perception, cognition, agency and creativity. Media theory, digital studies and the philosophy of technology have been the source of a fundamental anthropological questioning […] by showing the co-constitution of the human and the technical environment, namely concerning cognition and other superior capabilities of the human spirit. They are joined today by ecological thinking in the claim of a post-humanist turn of the humanities […]. Likewise, discourses and practices around digital arts moved beyond the aim of establishing a mere genealogy and procedural field to think how the digital is penetrating aesthetic, affective and political experience, as well as creative and collaborative practices in ways more fundamental or also more indirect (Teuchmann 2023: n. pag.).

The U.S. media artist Lynn Hershman Leeson, for example, has been working for decades with the concept of a ‘transgenic cyborg’ (cf. fig. 5) in order to explore this crossover between nature and the digital. In her work, dynamic hybrid network structures formed by human and non-human actors, by biological, inorganic, and technological entities as creative co-agents, are portrayed as increasingly determining our life. While comprehensive and highly complex technological networks are perceived as an epitome of the ‘digitization’ of societies, which for many goes hand in hand with constant transformation and disruption, developers, coders, and artists are experimenting with catalysts such as the quantum computer or so-called ‘biomedia’, which also call for a new, no longer purely anthropocentric perspective and a novel concept of creativity that is no longer the sole domain of the human.

Figure 5:

Lynn Hershman Leeson: Transgenic Cyborg (2000), courtesy of the artist

The most recent developments in artificial intelligence and highly effective quantum computing are becoming fundamental game-changers in this context. They produce new kinds of powerful assistive creative tools for visualization processes in art and design. With the help of these smart advanced technologies, an enhanced and altered human nature is also on the horizon, which is sometimes promoted by tech companies and laboratories as the evolution of homo sapiens from the cyborg to the super sapiens (cf. Supersapiens, the Rise of the Mind, 2017, a film by writer-director Markus Mooslechner). While our physical activities were taken over and enhanced by (mechanical) machines during the Industrial Revolution, now, in the course of the comprehensive digitization of society, more demanding mental, cognitive, and even creative activities are increasingly being automated and enhanced as well. AI-supported information technologies now rival the natural abilities and cultural skills of homo sapiens: Creative artifacts and technical inventions can already evolve autonomously, not only when AI software writes new programs semi-automatically but also when it produces AI images of humans beyond the human imagination and without the ingenuity or participation of humans.

Like the apparatus-driven technology of photography in the mid-nineteenth century, which fundamentally mechanized image production but was regarded as a kind of ‘natural magic’ in its early days (cf. photography pioneer William Henry Fox Talbot, 1800-1877), artificial intelligence today also appears to many as a mysterious technology that is increasingly intimidating or even humiliating homo sapiens. The impression of magic, meanwhile, is reinforced by new text-to-image generators such as DALL·E, Midjourney, or Stable Diffusion, for which only a working text formula must be found, like some ‘magic spell’. Once again, audiences gaze in awe and wonder at hitherto unimagined and unseen automatic productions that form seemingly out of nothing, virtually at the summons of the machine (i.e., by just inputting prompts). However, ‘conjuring’ always means playing with illusion. Behind the new digital image productions, there are, first and foremost, algebraic operations and stochastic calculations processing image clusters – even if they are, in the end, presented by creatively staging and performing anthropomorphic machines like androids or humanoid robots, which promote the illusion of subjectivity and sensibility, autonomous creativity, and authorship. At present, they just effectively mimic being the sole and soulful creators in the spotlight on the various media stages.

AI and Creativity in the Arts

Since the end of the 20th century, one of the origins of the AI revolutions, Silicon Valley, has produced new (symbolic) visions of the world, both figuratively and literally, that already have a global impact: Its dreams and programmed predilections are reflected in the new technology-driven aesthetics of digital arts and computer-aided design. Contemporary artists designing creative machines visibly use or critically reflect these inventions and new advanced computer technologies, either as the latest tools or as collaborative smart agents. Artificial intelligence, seen in this context, can appear as either an objective technology or a subjective co-creator. In recent years, for example, intelligent algorithms and artificial neural networks have not only enabled ‘intelligent’ machines such as (humanoid) robots to become (seemingly autonomously) creative but have also been employed to perform their exceptional abilities on various media stages. These ‘creative AI performers’ currently include, for example, the ‘ultra-realistic humanoid artist robot’ AI-Da, about which its Oxford-based creator and gallerist Aidan Meller (2019) emphasizes that

[t]oday, a dominant opinion is that art is created by the human, for other humans. This has not always been the case. The ancient Greeks felt art and creativity came from the Gods. Inspiration was divine inspiration. Today, a dominant mind-set is that of humanism, where art is an entirely human affair, stemming from human agency. However, current thinking suggests we are edging away from humanism, into a time where machines and algorithms influence our behaviour to a point where our ‘agency’ isn’t just our own. It is starting to get outsourced to the decisions and suggestions of algorithms, and complete human autonomy starts to look less robust. AI-Da creates art, because art no longer has to be restrained by the requirement of human agency alone (Meller 2019: n.pag.; original emphasis).

Thus, according to Margaret Boden’s prominent definition of creativity (cf. Boden 2004; 2010), such technologies created by a team of developers, coders, and engineers, along with artists and gallerists – such as AI-Da, Hiroshi Ishiguro’s Erica, or Hanson Robotics’ Sophia – are actually and ‘autonomously’ creative, albeit by different standards than those we employ for human producers, and with the subtle difference that they no longer create according to nature, but on the meta-level through the dataization and mediatization of the human world. What these anthropomorphic AI machines produce and exhibit is, however, first and foremost synthetic pictures of particular prevailing image cultures.

Figure 6:

Refik Anadol, Unsupervised, installation view, The Museum of Modern Art, New York, November 19, 2022 – March 5, 2023, © Refik Anadol Studio, courtesy of the artist

Refik Anadol’s fluid digital abstractionism (cf. fig. 6), with its mesmerizing 3D AI data pigmentations, for example, is, in contrast to the primarily figurative imagery of those creative robot-artists, deeply rooted in the historic abstract tradition and its legacies on the one hand, but also boldly crosses the boundaries between computer art, communication design (data visualization and data modeling), and advanced technologies on the other. It also paves the way for impressive multi-sensory experiences that allow their poly-cultural audiences to see the unseen and make the invisible visible; even more, Anadol’s work visualizes a machine learning and AI-based non-human understanding of our world and produces co-creative knowledge between man and machine. The digital artist uses AI models to turn data, as a collection of discrete values that convey information describing quantity, quality, fact, statistics, and other basic units of meaning, into an experiential artwork. New hardware and software developments thus enable hitherto unseen algorithmic aesthetics (beyond the human imagination) and novel representations of our (surrounding) world from the perspective of mechanized machine memory, based primarily on operations of its computational data and the collected information.

Probably most impressively, however, we can experience how these new computational co-creators presently co-design novel digitized bodies, body images, or even entire new worlds to live in, in popular CGI fantasy genres of computer games and sci-fi movies. Meanwhile, many synthetic bodies (‘synthients’) and social chatbots are already on the move in our visual culture as well, among them deceptively life-like characters presented with subjective world views, such as the model avatars of the agency The Diigitals or prominent virtual influencers like Miquela Sousa (or Lil Miquela, a fictional American character and ‘AI robot’ created by Trevor McFedries and Sara DeCou) on social media (cf. Scorzin 2021b; 2021c). They are advancing to become social co-existents, e.g., ‘friends’ for their followers through their attractive, fashionable appearances and communicative behavior. As seemingly individual characters with flexible and fluid identities and synthetic narratives (cf. Lamerichs 2019), they are also digital depictions and virtual forerunners of a trans- and post-humanist world. For, in addition to transformations of body images in the form of the new hybrid beings described above, AI also feeds into a desire for immortality by helping to create timeless, never-aging virtual bodies and flexible fashionable avatar designs (cf. fig. 7) – thus, virtual bodies that always remain fit and optimized for a culture of ruling performativity and a (self-)staging on the fully mediatized stages of our (internet) life.

Figure 7:

AI-powered avatar design for Mixed Realities and the Metaverse, 2023

What if, soon, only fluid and flexible AI avatars will be entertaining us in the Metaverse? Some say the AI musicians are ‘coming for your playlists’, and they are already mimicking your favorite artists in music videos, too, like Travis Bott (cf. fig. 8). ‘He’ is an AI-powered musician inspired by Travis Scott, the famous US rapper, producer, and songwriter known for Sicko Mode. In 2019, Travis Bott had a new title and accompanying video that featured visual representations of the virtual singer himself – who is, not surprisingly, very much a Scott look-alike, his digital twin, partly imagined and animated by artificial intelligence. The AI-generated song and its lyrics are titled Jack Park Canny Dope Man; for its audiences, Travis Bott is eerily close in aesthetics, lyrics, and sound design to its original source of inspiration. Responsible for this art project is the US-based, tech-driven creative company Space150. The AI model used to compose and visualize the song learned the characteristic lyrics and signature-style melodies of some of Scott’s most commercially successful compositions before creating its own take, which could be seen as either a fake or a homage. Self-referential glitches in the music video at least indicate that this production was thoroughly processed by smart algorithms. It gives us a glimpse of a future in which everyone might easily be able to prompt a video or a film with the help of text-to-image (or text-to-other-media) AI generators. Who then needs real, i.e., human entertainers?

Figure 8:

Screengrab from the Travis Bott music video (Travis Bott 2020).

Conclusions and Outlook

Well-entertained audiences are also happy to consume digital body images as their new fashionable skins, in AI art installations, in stylish interfaces for Mixed Reality, or in immersive computer games – before the advanced technology perhaps becomes even more body-invasive and creates the often-envisioned and fantasized new transhumanist bodies of the future. AI-generated digital body images, often over-sexualized and idolized (as, for example, in the current 3D characters and avatar designs for social media and the forthcoming Metaverse created with AI-driven apps), thus mark only an intermediate evolutionary stage on the way to the transient transhumanist human body. They could also be seen in a more pessimistic light, as leading into dystopian scenarios like that of Steven Spielberg’s visionary meta-movie Ready Player One (2018).

As such, in the age of technologically networked cooperation and co-creation between man and machine, the design process increasingly starts with something like the prompt command line “/imagine”, transforming mere texts/ekphrases into an operative means of AI-powered design and artistic production. Thus, generative AI can function either as a technical muse (cf. fig. 9) and inspiration or as a non-human collaborator and co-creator of new, meaningful, and valuable artifacts that emerge from its inherent technical feedback loops and are relatable and comprehensible – but simultaneously completely unexpected and sometimes beyond any human imagination and intention.

At the same time, synthetic AI images are a new technology-driven visual language (cf. Scorzin 2023) that many of us humans have yet to study and learn better. In psychology, reality monitoring identifies whether something is coming from the external world or from within our brains’ biological neural networks. Such an objective vs. subjective dualism seems increasingly outdated in light of machine vision and machine learning. The advance of AI technologies that can now produce meta-images of meta-humans, highly photo-realistic to human eyes, means that ‘reality monitoring’ must soon be based on information other than our sensory judgments – maybe even primarily on powerful machine vision and AI knowledge.

Figure 9:

Levania Lehr x DALL·E 2: Fictitious Self-Portrait, October 2022

Bibliography

Boden, Margaret: The Creative Mind: Myths and Mechanisms. 2nd edition. London [Routledge] 2004 [1990]

Boden, Margaret: Creativity and Art: Three Roads of Surprise. Oxford [Oxford University Press] 2010

Grünberger, Christoph: The Age of Data: Embracing Algorithms in Art & Design. Zürich [Niggli Verlag] 2021

Harmsen, Lars; Julia Kahl (eds.): Slanted Magazine #37: AI – Artificial Intelligence. Karlsruhe [Slanted Publishers] 2021

Kelly, Kevin: Picture Limitless Creativity at Your Fingertips. In: Wired. November 17, 2022; https://www.wired.com/story/picture-limitless-creativity-AI-image-generators/ [accessed February 16, 2023]

Lamerichs, Nicolle: Characters of the Future: Machine Learning, Data and Personality. In: IMAGE: The Interdisciplinary Journal of Image Sciences. Special Issue Recontextualizing Characters, 29, 2019, pp. 98-117

Meller, Aidan: AI-Da. In: AI-da robot. 2019. https://www.AI-darobot.com [accessed February 16, 2023]

Miller, Arthur I.: The Artist in the Machine: The World of AI-Powered Creativity. Cambridge, MA [MIT Press] 2019

Manovich, Lev; Emanuele Arielli: Artificial Aesthetics: A Critical Guide to AI, Media and Design. In: Manovich.net. December 15, 2021. http://manovich.net/index.php/projects/artificial-aesthetics [accessed February 16, 2023]

Rauterberg, Hanno: Die Kunst der Zukunft: Über den Traum der kreativen Maschine. Berlin [Suhrkamp Verlag] 2021

Riesewieck, Moritz; Hans Block: Die digitale Seele: Unsterblich werden im Zeitalter Künstlicher Intelligenz. München [Goldmann Verlag] 2020

Scorzin, Pamela C. (ed.): KUNSTFORUM International Vol. 278: AI ART. Kann KI Kunst? Neue Positionen und technisierte Ästhetiken. Köln [KUNSTFORUM International] 2021a

Scorzin, Pamela C.: As Real as Rihanna? The Curious Case of Miquela Sousa. In: Slanted Magazine, 37: AI – Artificial Intelligence, 2021b, pp. 100-105

Scorzin, Pamela C.: More Human than Human: Digital Dolls on Social Media. In: Insa Fooken; Jana Mikota (eds.): Puppen als Seelenverwandte – biographische Spuren von Puppen in Kunst, Literatur, Werk und Darstellung. Dolls/Puppets as Soulmates – Biographical Traces of Dolls/Puppets in Art, Literature, Work and Performance. Siegen [UP] 2021c, pp. 157-166

Scorzin, Pamela C.: Ko-Kreation und Evolution in der AI ART – am Beispiel von Pierre Huyghes ‘Mental Image’-Installationen. In: Kunsttexte, Festausgabe (Sektion Kunst Design Alltag), 1, 2022, pp. 1-7. https://journals.ub.uni-heidelberg.de/index.php/kunsttexte/article/view/88240?fbclid=IwAR3m_R3QMoCmtqByecY86AsQ68hdst1hvO0K6y3Ye2uBzhB19ptjyAcWWis [accessed February 16, 2023]

Scorzin, Pamela C.: Kreativität, Kunst und KI: Zur algorithmisierten Ästhetik der AI ART. In: Lars Grabbe; Christiane Wagner; Tobias Held (eds.): Kunst, Design und die “technisierte Ästhetik”. Marburg [Büchner Verlag] 2023, pp. 232-249

Hirsch, Andreas J.; Markus Jandl; Gerfried Stocker (eds.): The Practice of Art and AI: European ARTificial Intelligence Lab. Linz [Hatje Cantz] 2021

Teuchmann, Philipp: Arts and Humanities in Digital Transition (Lisbon, 6-7 Jul 23). In: ArtHist.Net. January 7, 2023. https://arthist.net/archive/38260 [accessed February 16, 2023]

Travis Bott: Travis Bott – JACK PARK CANNY DOPE MAN. Video on YouTube. December 20, 2020. https://www.youtube.com/watch?v=3UwLhqcZqxc [accessed February 16, 2023]

Tsakiris, Manos: Deepfakes: Faces Created by AI Now Look More Real than Genuine Photos. In: Singularity Hub. January 26, 2023. https://singularityhub.com/2023/01/26/deepfakes-faces-created-by-AI-now-look-more-real-than-genuine-photos/ [accessed January 2023]

Vincent, James: Tom Cruise Deepfake Creator Says Public Shouldn’t be Worried about ‘One-Click Fakes’. In: The Verge. March 5, 2021. https://www.theverge.com/2021/3/5/22314980/tom-cruise-deepfake-tiktok-videos-AI-impersonator-chris-ume-miles-fisher [accessed February 16, 2023]

Zylinska, Joanna: AI Art. Machine Visions and Warped Dreams. London [Open Humanities Press] 2020. http://openhumanitiespress.org/books/download/Zylinska_2020_AI-Art.pdf [accessed February 16, 2023]

About this article

Copyright

This article is distributed under Creative Commons Attribution 4.0 International (CC BY 4.0). You are free to share and redistribute the material in any medium or format. The licensor cannot revoke these freedoms as long as you follow the license terms. You must, however, give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use. You may not apply legal terms or technological measures that legally restrict others from doing anything the license permits. More information at https://creativecommons.org/licenses/by/4.0/deed.en.

Citation

Pamela C. Scorzin: AI Body Images and the Meta-Human. On the Rise of AI-generated Avatars for Mixed Realities and the Metaverse. In: IMAGE. Zeitschrift für interdisziplinäre Bildwissenschaft, Band 37, 19. Jg., (1)2023, S. 179-194

ISSN

1614-0885

DOI

10.1453/1614-0885-1-2023-15470

First published online

May 2023